The rapid pace of digitalisation is driving a major shift in modern society. Advances in data science and artificial intelligence bring many new and promising opportunities for the public and private sectors alike. At the same time, they spark concern and debate about ethics. Are all these developments positive? And how can they be managed responsibly when you introduce them to your business and the people you work for?

Connecting Technology and Society

The question of whether a technology is ethical is often framed in binary terms. Does it do good or harm? Should we accept it or reject it? This framing tends to steer the conversation towards broad concerns such as privacy violations, job displacement, and bias and discrimination. As a result, these discussions imply that new technologies inherently carry a potential threat, something to be either permitted or prohibited. However, setting technology against society in ethical debates does not do justice to how intertwined the two are. Technology and society have always shaped each other: we have developed technology as much as it has developed us.

Ethics, in this sense, should not be about labelling technology as ‘good’ or ‘bad’, but about asking how new technologies can be developed to reflect and protect our shared principles and values. This question is relevant regardless of a technology's scope and impact, from large-scale, complex fraud detection algorithms in the public and financial sectors to simple hiring algorithms used by businesses. Ethics, then, is not an external assessor but an internal guide for responsibly designing, implementing, and using new technology in data science projects.

Introducing Guidance Ethics as a Practical Approach

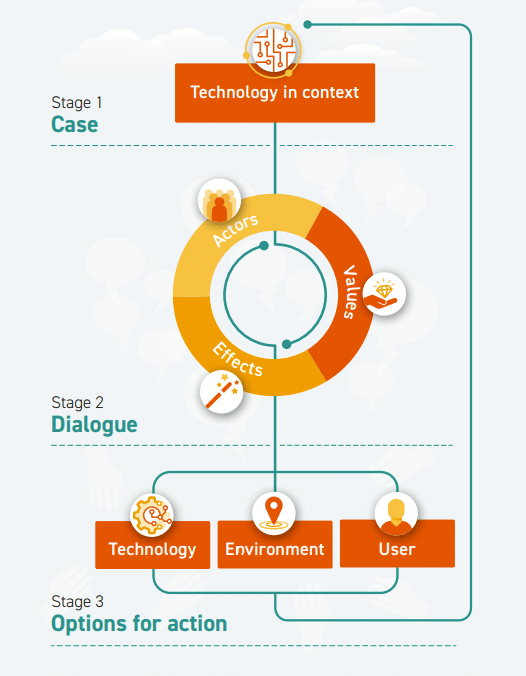

Treating ethics as a guide for technology rather than an assessment of it raises the question: how do you put this into practice? One helpful tool is the Guidance Ethics Approach, developed by Prof. Peter-Paul Verbeek (University of Amsterdam) and ECP (Platform for the Information Society). It offers a structured way to identify and understand the shared values at stake when introducing new data and AI innovations, and to translate those values into practical actions and design choices. The approach follows a three-step method.

First, the technology and its application are placed in context through a clear, straightforward description of the case that an external audience can understand. Second, a structured dialogue is organised between the different stakeholders involved. This dialogue identifies the possible positive and negative effects of the technology and the shared values surrounding its use. Bringing different perspectives together in this step builds a mutual understanding of the values at stake.

Finally, a course of action is drawn up based on the findings of the previous steps. Three types of actions are identified: actions connected to the technology itself (ethics by design), actions that shape its environment (ethics in context), and actions that determine how it is used (ethics by user). The result is a clear, specific, and comprehensive action plan that integrates ethics into your data science projects from design through to implementation.

How can ADC help your organisation?

Legal and normative frameworks for machine learning and AI applications are continually being introduced and revised. Beyond legal compliance, ethics also plays an important role in the public debate around data science and AI.

At ADC, Elianne Anemaat (Senior Project Lead, Public Practice) is certified to apply the guidance ethics methodology (aanpak begeleidingsethiek) developed by ECP and Professor Peter-Paul Verbeek. We offer this approach to our clients to help them introduce new AI innovations responsibly, with explicit attention to the underlying values and ethics.

We also support organisations in integrating ethics and regulation into the development of data science applications. We do this through accessible, low-threshold assessments that provide insight into the extent to which an application is compliant and how it can be developed further. In addition, we invest in raising awareness of ethics and compliance through workshops and training courses for teams and employees.

Let's shape the future

Would you like to know more about this approach or what Amsterdam Data Collective can do for you? Get in touch with Elianne Anemaat or check our contact page.
